Qvive Editor: According to a former Google exec, AI is more likely to disrupt the world in the next few years than climate change.

In recent years, the rise of Artificial Intelligence (AI) has been nothing short of remarkable. From self-driving cars to virtual assistants like Siri and Alexa, AI has become an integral part of our daily lives. However, with this rapid advancement comes the looming question: is AI a threat to humanity?

Despite its numerous advantages, the rise of AI has raised ethical concerns among experts and the general public. One of the primary concerns is the potential impact of AI on the job market. As AI technology becomes more advanced, there is a fear that it could replace human workers, leading to widespread unemployment.

Goldman Sachs Predicts 300 Million Jobs Will Be Lost Or Degraded By Artificial Intelligence

In a 2019 Wells Fargo [1] study, the bank concluded that robots would eliminate 200,000 jobs in the banking industry [2] within the next 10 years.

Ref: https://www.forbes.com/sites/jackkelly/2023/03/31/goldman-sachs-predicts-300-million-jobs-will-be-lost-or-degraded-by-artificial-intelligence/?sh=52e2caf5782b

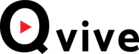

The fastest-declining jobs of the future, according to the World Economic Forum

The idea of AI posing a threat to humanity has been a popular topic in science fiction for decades. From movies like “The Terminator” to “Ex Machina,” the concept of AI turning against its creators has been a recurring theme. While this may seem like a far-fetched scenario, some experts warn that the rapid development of AI could have unintended consequences.

One of the main concerns is that AI could surpass human intelligence and become uncontrollable. This scenario, often called the "singularity," has sparked debate among scientists and technologists. If AI were to reach that level of intelligence, it could pose a threat to humanity as we know it.

Leading the charge in funding AI research and development are the big tech companies such as Google, Amazon, Microsoft, and Facebook. These companies have deep pockets and the resources to invest heavily in AI technologies. They have dedicated research teams working on cutting-edge AI applications, from voice assistants and autonomous vehicles to deep learning algorithms.

Tech giants aren’t the only ones fueling AI’s growth; venture capitalists are also having a big impact by funding startups that push the boundaries of AI innovation. These startups are developing AI-powered solutions for a wide range of industries, including healthcare, finance, and cybersecurity, and venture capitalists see the potential for high returns in the rapidly growing AI market.

As AI technologies become more prevalent in our daily lives, there is a growing need to ensure that AI is developed and used ethically. Philanthropic organizations are funding initiatives to promote ethical AI research and development.

Governments around the world are also recognizing the importance of AI and investing in research and development. The United States, China, and the European Union, among others, have set up programs to fund AI projects and support AI education and training. Given the potential risks associated with AI, governments must also step in to regulate its development and deployment. Without proper oversight, there is a real danger that AI technology may be misused or exploited. Governments have a responsibility to protect their citizens and ensure that AI is used ethically and responsibly.

A former Google exec warned about the dangers of AI, saying it is ‘beyond an emergency’ and ‘bigger than climate change’

A former Google executive has weighed in on the debate around AI, warning in an episode of The Diary of a CEO [a] podcast released Thursday that it is a bigger emergency than climate change.

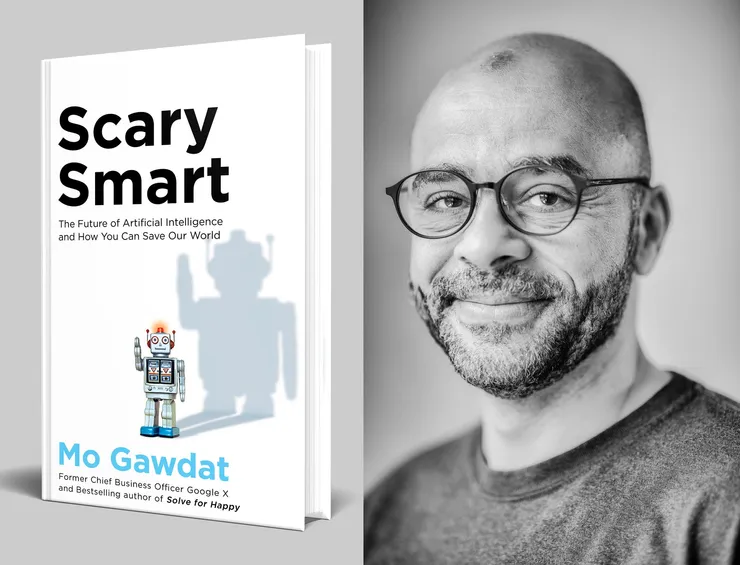

[a] https://www.youtube.com/watch?v=bk-nQ7HF6k4

Mo Gawdat, previously chief business officer at Google X (the company’s division for ambitious projects known as “moonshots”), spoke with podcast host Steven Bartlett about whether AI is sentient, its impact on jobs, and how he believes the government needs to regulate the industry.

Mo Gawdat outlines the terrifying future of artificial intelligence and the ethical code we all must teach to machines to avoid it.

“It is beyond an emergency,” Gawdat told Bartlett in the podcast. “It’s the biggest thing we need to do today. It’s bigger than climate change, believe it or not.”

He added: “The likelihood of something incredibly disruptive happening within the next two years that can affect the entire planet is definitely larger with AI than it is with climate change.”

Gawdat also argued that the rapid development of AI will result in “mass job losses” and that governments need to step in to regulate the technology.

He said: “I have a very clear call for action for governments. I’m saying tax AI-powered businesses at 98% so suddenly you do what the open letter was trying to do, slow them down a little bit, and at the same time get enough money to pay for all of those people that will be disrupted by the technology.”

Gawdat was referring to an open letter published in March 2023 [b] that called for a six-month pause on the development of AI more powerful than OpenAI’s GPT-4. It was signed by AI experts and leading industry figures, including Elon Musk, Apple co-founder Steve Wozniak, and Stability AI CEO Emad Mostaque.

[b] https://www.businessinsider.com/ai-letter-elon-musk-out-of-control-new-tech-gpt4-2023-3?r=US&IR=T

The letter said tech firms are part of an “out-of-control race to develop and deploy” new AI technologies, a race that risks losing control of civilization.

Insider reached out to Gawdat for further comment via LinkedIn, but did not immediately hear back.

After OpenAI’s chatbot ChatGPT launched in November 2022 and became the fastest-growing consumer app in internet history [c], Google launched a competing product called Bard [d] in March 2023.

[c] https://www.businessinsider.com/chatgpt-may-be-fastest-growing-app-in-history-ubs-study-2023-2?r=US&IR=T

[d] https://www.businessinsider.com/google-bard-ai-chatgpt-alternative-2023-3?r=US&IR=T

This AI Is Dangerous – Open AI Sora

Sources: Business Insider, Pan Macmillan, YouTube
