Artificial intelligence (AI) applications are everywhere, from big data analytics and military equipment to facial recognition software and self-driving cars. And they bring new challenges and opportunities to the semiconductor industry every day.

As a reminder, AI describes a machine or software application’s ability to reason, learn, and act in a manner similar to human cognition. In essence, AI makes it possible for machines to think.

The beginnings of AI date back to the 1950s, but recent advances in AI technology have seen a renaissance in the field. The development of machine-learning algorithms capable of processing massive amounts of data has opened new possibilities for AI devices. Today’s AI applications can not only process data but also learn from experience and apply that experience to improve how they function.

With AI applications gaining traction in the industrial, retail, health care, military, research, and consumer sectors, demand for specialized sensors, integrated circuits, improved memory, and enhanced processors is increasing. And this demand is changing the semiconductor supply chain by directly impacting design and manufacturing decisions.

How will AI affect semiconductor design and production?

AI demands will have lasting impacts on semiconductor design and production. In large part, this is because the amount of data processed and stored by AI applications is massive.

Semiconductor architectural improvements are needed to address data use in AI-integrated circuits. Improvements in semiconductor design for AI will be less about improving overall performance and more about speeding the movement of data in and out of memory with increased power and more efficient memory systems.

One option is the design of chips for AI neural networks that perform like human brain synapses. Instead of sending constant signals, such chips would “fire” and send data only when needed.
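The "fire only when needed" idea can be illustrated with a leaky integrate-and-fire neuron, a common textbook model of spiking behavior. This is only a minimal sketch: the function name, threshold, leak factor, and input values are hypothetical choices for illustration and do not describe any particular chip.

```python
def lif_spike_times(inputs, threshold=1.0, leak=0.9):
    """Return the time steps at which a leaky integrate-and-fire neuron spikes.

    All parameter values are illustrative assumptions, not hardware figures.
    """
    potential = 0.0
    spikes = []
    for t, current in enumerate(inputs):
        # Decay the stored potential, then add the incoming signal.
        potential = potential * leak + current
        if potential >= threshold:
            spikes.append(t)   # the neuron "fires" and emits data
            potential = 0.0    # reset after firing
    return spikes


# Hypothetical input: mostly silence with two bursts of activity.
inputs = [0.0, 0.0, 0.6, 0.6, 0.0, 0.0, 0.0, 1.2, 0.0, 0.0]
spikes = lif_spike_times(inputs)
print(spikes)
```

With this input the neuron stays silent on most steps and emits output only when accumulated input crosses the threshold, which is the property that lets such designs avoid sending constant signals.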

Nonvolatile memory may also see more use in AI-related semiconductor designs. Nonvolatile memory can hold saved data without power. Combining nonvolatile memory on chips with processing logic would make “system on a chip” processors possible, which could meet the demands of AI algorithms.

While semiconductor design improvements are emerging to meet the data demands of AI applications, they pose potential production challenges. As a result of memory needs, AI chips today are quite large. At this chip size, it is difficult for a chip vendor to turn a profit on specialized hardware, because manufacturing a specialized AI chip for every application is very costly.

A general-purpose AI platform would help address this challenge. System and chip vendors would still be able to augment the general-purpose platform with accelerators, sensors, and inputs/outputs. This would allow manufacturers to customize the platform for the different workload requirements of any application while also saving on costs. An additional benefit of a general-purpose AI platform is that it can facilitate faster evolution of an application ecosystem.

From a production standpoint, the semiconductor industry will itself also benefit from AI adoption. AI will be present at all process points, providing the data needed to reduce material losses, improve production efficiency, and reduce production times.

Why semiconductor companies must define their AI strategy

The semiconductor market [a] has, for most of the last decade, seen much of its profits tied to the smartphone and mobile device market. As the smartphone market begins to plateau, the semiconductor industry must find other growth opportunities.

[a] https://irds.ieee.org/home/what-is-beyond-cmos

AI applications, especially in the big data, autonomous vehicles, and industrial robotics industries, can provide those opportunities. By defining and then putting together their AI strategies now, semiconductor manufacturers can position themselves to take full advantage of the spreading AI market.

[b] https://advisory.kpmg.us/articles/2019/ai-transforming-enterprise.html

How will AI affect the semiconductor market?

AI offers semiconductor companies the chance to get the most value from the technology stack, the collection of hardware and services used to run applications. In the software-dependent world of PCs and mobile devices, the semiconductor industry is only able to capture 20 to 30 percent of the total value of the PC stack and as little as 10 to 20 percent of the mobile market.

Within the AI sector, the technology stack requires more hardware, especially in the fields of memory and sensors. This may allow the semiconductor market to control 40 to 50 percent of the total value of the stack, according to the Redline Group [c].

In addition, many AI applications will require specialized end-to-end solutions, which will necessitate changes to the semiconductor supply chain. Semiconductor companies—especially smaller companies producing niche products for the automotive and IoT industries—will be able to capitalize on markets by providing customized microvertical solutions addressing customer pain points related to storage, memory, and specialized computing needs.

How does AI change the demand for semiconductor chips?

The global AI market is forecast to grow to $390.9 billion by 2025 [d], representing a compound annual growth rate of 55.6 percent over that short period. Hardware lies at the foundation of each AI application.

[d] https://www.grandviewresearch.com/press-release/global-artificial-intelligence-ai-market

Storage will see the highest growth, but the semiconductor industry will reap the most profit by supplying computing, memory, and networking solutions. Demand for semiconductor chips will mirror the rapid ascent of the AI market.
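To see what a 55.6 percent compound annual growth rate implies for the $390.9 billion forecast, the arithmetic can be sketched as follows. The forecast window is not stated in the text, so the year counts below are assumptions chosen only to show how sensitive the implied starting market size is to that window.

```python
def cagr(start_value, end_value, years):
    """Compound annual growth rate between two values over a number of years."""
    return (end_value / start_value) ** (1.0 / years) - 1.0


def implied_start_value(end_value, rate, years):
    """Starting value that grows to end_value at the given annual rate."""
    return end_value / (1.0 + rate) ** years


FORECAST_BILLIONS = 390.9   # 2025 forecast quoted in the article
CAGR_RATE = 0.556           # 55.6 percent, as quoted in the article

# Assumption: the forecast window is not given, so several are tried.
implied = {}
for years in (5, 6, 7):
    implied[years] = implied_start_value(FORECAST_BILLIONS, CAGR_RATE, years)
    print(f"{years} years: implied starting market of "
          f"${implied[years]:.1f} billion")
```

At this growth rate the market multiplies by roughly 1.556 every year, so each additional year in the assumed window shrinks the implied starting size by about a third.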

Top semiconductor leaders in the industry

Semiconductors create economic tension because they represent a multibillion-dollar industry. While the US has historically maintained leadership in the semiconductor industry, more competitors have risen in other countries. Go deeper on the leading companies in semiconductors [d].

[d] https://irds.ieee.org/topics/semiconductor-leaders

US leads market share of semiconductors

According to the Semiconductor Industry Association (SIA) [e], the United States owns 46 percent of the market share for global sales of semiconductors. The following companies represent the top five semiconductor industry leaders, in order of market share:

- Intel Corporation ($241.88 billion)

- Samsung Corporation ($221.6 billion)

- NVIDIA Corporation ($152.88 billion)

- Texas Instruments Incorporated ($113.83 billion)

- Broadcom Inc. ($108.13 billion)

Of these companies, Samsung is headquartered in South Korea while the rest reside in the US.

[e] https://www.semiconductors.org/2020-state-of-the-u-s-semiconductor-industry/

While the US currently maintains its industry dominance, experts eye China [e] as the next large competitor. Semiconductor sales and marketing teams should prepare accordingly to deal with this rising contender.

[e] https://spectrum.ieee.org/view-from-the-valley/semiconductors/devices/semiconductor-industry-veterans-keep-wary-eyes-on-china

What resources are essential to the American semiconductor industry?

The list of materials needed for semiconductor manufacturing is impressively long. Relatively common materials include the following:

- aluminum

- boron

- copper

- germanium

- gold

- lead

- phosphorus

- silicon

- silver

- tin

In addition, semiconductor manufacturing requires more esoteric materials such as gallium arsenide, platinum silicide, titanium silicide, and titanium tungsten. Some of the most important resources needed in the industry, however, are rare earth metals.

Often used in high-κ dielectrics and chemical-mechanical planarization (CMP) slurries, rare earth metals are a set of seventeen elements that have similar chemical properties. Examples include scandium, lanthanum, neodymium, dysprosium, and promethium.

Despite their name, rare earth metals are not especially rare: they are distributed fairly evenly over the earth’s surface. The relative lack of large rare earth deposits makes them difficult to mine and process, however. Few nations have made concerted efforts to access their rare earth resources, with the notable exception of China.

China currently mines and processes approximately 85 percent of the world’s supply of rare earth metals, including the neodymium and dysprosium used in electric vehicle motors. It would take the rest of the world decades to build the infrastructure needed to match China’s rare earth metal output.

As such, China can exert influence on the price of rare earth metals or even choose to withhold them from rival nations during trade disputes. This last tactic has the American semiconductor industry concerned, given the existing trade tensions between the two countries. A moratorium on exports of rare earth metals to the US could have a serious impact on semiconductor sales. Realizing this, the US government has a stockpile of rare earth metals, and some companies are experimenting with alternative metals.

How AI technology provides opportunities for semiconductor companies

According to McKinsey [f], AI accelerator chips (chips designed to work with neural networks and machine learning) will see a growth rate of approximately 18 percent annually—five times greater than that seen for semiconductors used in non-AI applications. Areas of high growth will include AI chips for autonomous vehicles and in the broader field of neural networks.

[f] https://www.mckinsey.com/~/media/McKinsey/Industries/Semiconductors/Our%20Insights/Artificial%20intelligence%20hardware%20New%20opportunities%20for%20semiconductor%20companies/Artificial-intelligence-hardware.ashx

Neural networks are specialized AI algorithms based on the human brain. The networks are capable of interpreting sensory data and detecting patterns in large amounts of unstructured data. Neural networks find use in predictive analysis, facial recognition, targeted marketing, and self-driving cars. And they require AI accelerators and multiple inferencing chips, all of which the semiconductor industry will supply.

How can semiconductor companies benefit from AI technology?

AI adoption [g] holds the possibility for growth in the following areas of semiconductor manufacturing:

- Workload-specific AI accelerators

- Nonvolatile memory

- High-speed interconnected hardware

- High-bandwidth memory

- On-chip memory

- Storage

- Networking chips

Investing in research and development while building relationships with AI software providers will help chip manufacturers capture their share of these markets—if they can meet the coming demand.

Impact of artificial intelligence on the semiconductor industry

The immediate future of AI [h] has the potential to put strain on the industry supply chain unless semiconductor manufacturers plan to meet demand now. At the same time, the industry will itself benefit from AI, whose applications throughout the manufacturing process will improve efficiency while cutting costs.

[h] https://ieeexplore.ieee.org/document/8325446

How will AI technology affect semiconductor production?

Just as other industries are embracing AI, so too is the semiconductor industry (PDF, 10 MB) [i]. AI expertise [j] coupled with high-performance computing will allow manufacturers to develop new efficiency benchmarks and increase output.

[i] https://www.pwc.com/gx/en/industries/tmt/publications/assets/pwc-semiconductor-report-2019.pdf [j] https://spectrum.ieee.org/tech-talk/robotics/artificial-intelligence/certified-artificial-rates-the-ai-expertise-of-thought-leaders-and-companies

One of the key challenges to the semiconductor supply chain is chip production processing time. It takes weeks to get from initial processing to the final product. During this time, up to 30 percent of production costs are lost to testing and yield losses.

Embedding AI applications into the production cycle allows companies to systematically analyze losses at every stage of production so manufacturers can optimize operating processes. This ability will become even more valuable when working with next-generation semiconductor materials, which tend to be more expensive (and volatile) than traditional silicon.
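As a rough illustration of stage-by-stage loss analysis, per-stage yields multiply into an overall line yield, and the weakest stage is the natural place to focus optimization. The sketch below uses hypothetical stage names and yield figures invented for the example; it shows only the bookkeeping, not an AI model.

```python
from math import prod

# Hypothetical per-stage yields (fraction of units passing each stage).
# These stage names and numbers are illustrative, not industry data.
stage_yields = {
    "lithography": 0.98,
    "etch": 0.97,
    "deposition": 0.99,
    "test": 0.95,
}


def cumulative_yield(yields):
    """Overall yield is the product of the individual stage yields."""
    return prod(yields.values())


def worst_stage(yields):
    """Name of the stage with the lowest yield, i.e. the largest loss."""
    return min(yields, key=yields.get)


overall = cumulative_yield(stage_yields)
print(f"Overall yield: {overall:.3f}")
print(f"Largest loss occurs at: {worst_stage(stage_yields)}")
```

Because the stage yields multiply, small losses at several stages compound, which is why tracking every stage rather than only final test results matters.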

How will AI technology affect the workforce in the semiconductor industry?

While the rise of AI brings many opportunities to the semiconductor industry, it also heralds a crisis in talent acquisition. The larger tech companies—most notably Google, Apple, Facebook, Amazon, and the like—are investing heavily in AI research, development, and implementation, especially in the arenas of big data analytics and deep learning.

This presents two challenges to chip makers. First, the major players in the AI industry increasingly develop their own hardware, as this allows them to customize proprietary hardware to match their AI applications’ specific needs. This move toward in-house chip production, by extension, means the largest tech companies will purchase less from dedicated chip manufacturers.

Second—and this is where workforce considerations come into play—tech giants designing and manufacturing their own chips in house will need employees. With limited talent pools in both AI and the semiconductor industry, this will lead to talent shortages.

Future of semiconductors and artificial intelligence

Self-driving cars. High-performance computing. Quantum computing. AI makes what was science fiction at the turn of the century into reality. With these AI advances [k] come demands for new semiconductor technology and deep changes to the industry.

How is artificial intelligence expected to affect the semiconductor industry in the future?

To adapt to an industry increasingly dominated by the need for AI hardware, semiconductor manufacturers will need to provide industry-specific end-to-end solutions, innovation, and the development of new software ecosystems.

End-to-end services will require chip makers to work with partners to develop industry-specific AI hardware. While this may limit the semiconductor manufacturer to working with only certain industries, the alternative—the traditional production of general products—may not attract the same customers it does at present. An exception would be the production of cross-industry solutions that serve the needs of an interrelated group of industries.

With the production of specialized products comes the need to develop existing ecosystems with partners and software developers. The goal of such ecosystems is to develop relationships in which partners rely on and prefer the semiconductor company’s hardware. Semiconductor manufacturers will need to produce hardware that partners cannot find elsewhere at similar value. Such hardware—coupled with simple interfaces, dev kits, and excellent technical support—will help build long-lasting relationships with AI developers.

Innovation, as always, plays a role in the future of semiconductors. In addition to the ongoing efforts to circumvent the limitations of Moore’s Law, semiconductor research and development will need to consider how sensors, memory, and microprocessors enable and support emerging AI applications. Focusing on serving the needs of AI and the equally important IoT industry will help keep chip makers at the forefront of the industry.

What is driving the popularity of artificial intelligence in the semiconductor industry?

Demand from both the public and private sectors is driving the rapid development of AI—and as a result the importance of AI to the semiconductor industry. Of special note is the trend toward advanced driver assistance systems and electric vehicles. Even if the arrival of truly autonomous vehicles in large numbers remains years away, automotive AI [l] applications for monitoring engine performance, mileage, and driver habits are already here. Insurance companies are already using in-car AI apps to evaluate driving habits and determine premium rates.

[l] https://www.tractica.com/research/artificial-intelligence-for-automotive-applications/

While the smartphone industry [m] is plateauing in terms of growth, the demand for embedded AI in mobile devices is growing. Phones use AI for navigation, for voice-to-text software, for facial recognition security, and for personal assistants. The advent of Alexa and other smart home hubs, and their ability to be controlled from afar by phone apps, represents another growth area for AI.

[m] https://www.mordorintelligence.com/industry-reports/smartphones-market

Then there are the uses for AI the general public is only tangentially aware of. City planners increasingly rely on AI to report on traffic volume, sewer usage, and infrastructure maintenance. Utility companies use AI to set electricity and water rates or to alert technicians to incidents or maintenance events.

Retail and online retail stores use AI to predict consumer needs and preferences, with what some see as alarming precision. Similar software is used by major social network platforms when choosing content and ads for individual users. AI has applications in health care, bioscience, industry, government, and the military: anywhere large amounts of data need to be processed quickly, analyzed, and acted upon.

How semiconductors and artificial intelligence will work together in future applications

As an industry, AI has grown rapidly since its initial development in the 1950s. Going forward, semiconductor and AI technology will need to evolve in tandem to reach maximum profitability.

Experts predict the AI market will be worth $733.7 billion [n] by 2027. This growth will increase demand for integrated circuits, processors, and improved sensors, all of which depend on semiconductor technology. Go deeper on semiconductors and artificial intelligence.

Artificial intelligence’s growth and impact on the semiconductor sector

Because AI essentially allows computers to “think” and “learn,” semiconductor technology needs to adapt for these unique considerations. Instead of prioritizing speed and power, semiconductor manufacturers must shift their focus to efficiency.

Already, researchers have created chips that mimic human synapses, firing only when needed instead of constantly remaining “on.” In addition, nonvolatile memory technology allows data storage even when turned off. Combining this with processing logic will allow chips to adapt to AI demands.

However, current AI chips are quite large and costly. To make AI products practical for everyday consumers, more innovation must occur in this area.

AI industries impacted by semiconductor technology

Looking toward the future, AI will inevitably change the way our world works. As it stands, AI researchers and developers already have begun to disrupt the following markets:

- Automotive

- Financial services

- Healthcare

- Media

- Retail

- Industrial

- Construction

- Agriculture

In conjunction with IoT, artificial intelligence will only increase demand and innovation in the semiconductor sector.

Why must the semiconductor industry embrace artificial intelligence?

AI has negative uses as well as positive—the Cambridge Analytica (PDF, 423 KB) [o] scandal proved how powerful a tool AI can be when used to identify and manipulate people’s behavior and opinions. Like so many technologies, AI is a double-edged sword. One thing it is not is temporary. AI applications are here to stay and will only become more commonplace and complicated with time. As every AI task needs to be founded on reliable hardware, the semiconductor industry has a vested interest in seeing AI succeed.

[o] https://faculty.washington.edu/aragon/pubs/LA_WEB_Paper.pdf

Read more about semiconductors and artificial intelligence in the IRDS™ Roadmap

Which American semiconductor companies work with the government and military?

Silicon Valley and the US military have ties reaching back to WWII. Over the years many semiconductor companies have acted as defense contractors. At present, key players include the following:

- Airbus Group

- Altera Corporation (an Intel subsidiary)

- BAE Systems

- General Dynamics

- Infineon Technologies

- Lockheed Martin

- Microsemi

- Northrop Grumman

- ON Semiconductor

- Raytheon

- Texas Instruments

- Xilinx

How do semiconductor manufacturers compete for government and military contracts?

Semiconductor manufacturers interested in becoming US defense contractors must bid on contracts offered by the Department of Defense, which awards hundreds of billions of dollars in contracts each year. The size of the bidding company is not always an issue: the DOD regularly awards contracts to smaller firms offering niche products.

To begin the process, a semiconductor company must first obtain a Data Universal Numbering System number and register with the System for Award Management. Registration is required for all companies planning to bid on defense contracts.

Networking is as vital for defense contractors as it is for any other business enterprise. Companies should have a working knowledge of federal codes and ideally forge relations with entities who have worked for the government in the past. Collaboration between semiconductor companies and other fields within the aerospace and military sector is common.

Prior to submitting a proposal, become familiar with the military standards required by the DOD through sources such as EverySpec [p], government handbooks, and publications. Pay special attention to the MIL-STD-810G [q], the military’s standard equipment-testing procedures, as well as the marking, labeling, and identification marking standards set out in MIL-STD-129R [r] and MIL-STD-130 [s] (PDF, 421 KB).

[p] http://everyspec.com/ [q] http://everyspec.com/MIL-STD/MIL-STD-0800-0899/MIL-STD-810G_12306/ [r] https://www.dla.mil/Portals/104/Documents/LandAndMaritime/V/VS/Packaging/LM_MILSTD129R_151007.pdf [s] https://www.acq.osd.mil/dpap/UID/attachments/mil-std-130m-20051202.pdf

Finally, the company presents its proposal and bid to the DOD. The process is complex and, due to the sensitive nature of the aerospace and military sectors, extremely rigorous. For a well-positioned semiconductor company, however, a defense contract can open doors to long-lasting partnerships with government and military interests.

Additional Information:

From Moore’s Law to NTRS to ITRS to IRDS™

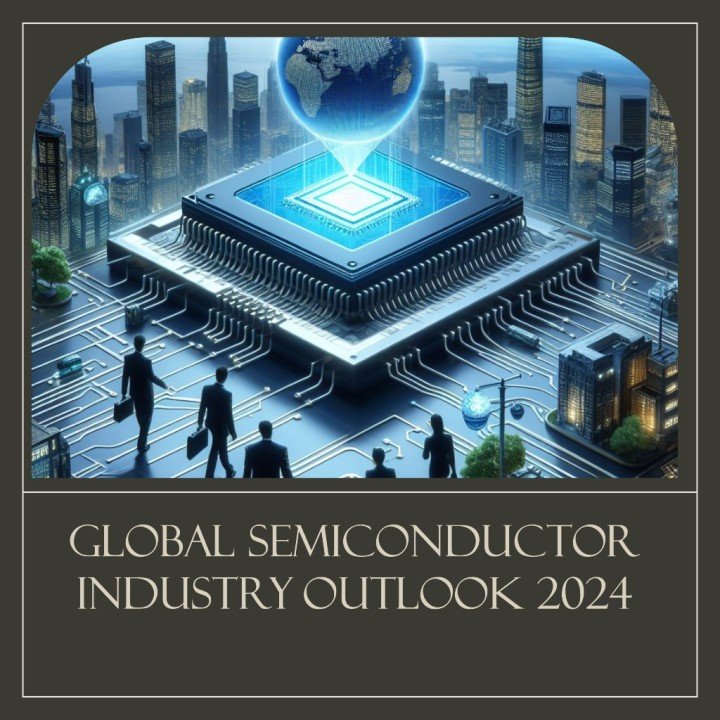

The semiconductor industry is unique among industries in having begun with a roadmap. In most cases an industry roadmap is initiated only when the industry requires a major revolutionary revision that departs from the way it had operated for decades.

In 1965 Gordon Moore mapped the future of the semiconductor industry before such an industry even existed (Electronics, Volume 38, Number 8, April 19, 1965). The semiconductor industry grew and diversified in the subsequent decades and it was not until 1991 that the US semiconductor community decided to formulate a 200-page document detailing all the aspects of the industry. In the meantime the semiconductor community had expanded to other regions and therefore it became clear that a broader world-wide approach to roadmapping needed to be taken.

The ITRS (or International Technology Roadmap for Semiconductors) was produced annually by a team of semiconductor industry experts from Europe, Japan, Korea, Taiwan and the US between 1998 and 2015. Its primary purpose was to serve as the main reference into the future for university, consortia, and industry researchers to stimulate innovation in various areas of technology.

A handful of these areas include:

- Process integration, devices, and structures

- System drivers and design

- Factory integration

- Microelectromechanical systems

- Emerging research devices

- Emerging research materials

- IC interconnects

In 2005 the ITRS published the first white paper to introduce the terms “More than Moore” (MtM) and “More Moore” (MM).

This announcement heralded the introduction of the iPhone and the iPad in the following years. Therefore, in December 2012, during the annual meeting held in Taiwan, the ITRS decided to reorganize in order to address the renewed ecosystem of the microelectronics industry.

To accomplish this task, the International Roadmap Committee (IRC) identified and developed seven International Focus Teams (IFTs) in the 2013-2014 timeframe and renamed the roadmap ITRS 2.0:

- System Integration

- Outside System Connectivity

- Heterogeneous Integration

- More than Moore

- Beyond CMOS

- More Moore

- Factory Integration

All IFTs included all the elements of the ITRS plus many new elements, such as heterogeneous integration, wireless connectivity, etc.

International Roadmap for Devices and Systems™

What is the IRDS™?

The IRDS™ is a set of predictions that serves as the successor to the ITRS. The intent is to provide a clear outline to simplify academic, manufacturing, supply, and research coordination regarding the development of electronic devices and systems.

The goals of the roadmap are as follows:

- To identify key trends related to devices, systems, and all related technologies by generating a roadmap with a 15-year horizon

- To determine generic devices’ and systems’ needs, challenges, potential solutions, and opportunities for innovation

- To encourage related activities worldwide through collaborative events, such as related IEEE conferences and roadmap workshops

The shift and evolution of the roadmap from the ITRS to the IRDS™ has translated to an expanded focus on systems. Emphasis has been placed on architectures and applications that deviate from the traditional paradigm of device->circuit->logic gate->functional block->system.

What other technologies are within the IRDS™?

The 2021 IRDS Update is the revision of most chapters of the 2020 International Roadmap for Devices and Systems™, which is the culmination of work analyzing and predicting present and future technological needs over the next fifteen years. The roadmap encompasses an immense scope of the electronics industry, particularly the semiconductor and computer industries; everything from application needs down through device and manufacturing requirements is covered in the roadmap.

The 2022 IRDS Edition is a full edition year. It includes newly added topics as white papers for Autonomous Machine Computing and Mass Data Storage. Additionally, a white paper addressing the most current challenges for Environment, Safety, Health and Sustainability (ESH/S) will be presented in 2023. Key ESH/S white paper elements will include, for example, supply chain constraints, materials technology versus regulatory challenges, water/energy/wastewater, and product content/ecology and circularity.

As can be seen, the IRDS™ is far more than Beyond CMOS, as it addresses the integration and complexity of devices and systems. The IRDS™ covers a number of critical domains and technologies in great detail, including system attributes for cloud computing, cyber-physical systems, mobile technologies, and the Internet of Things (IoT). Beyond CMOS is just one aspect in the evolution of computing.

Ref: https://irds.ieee.org

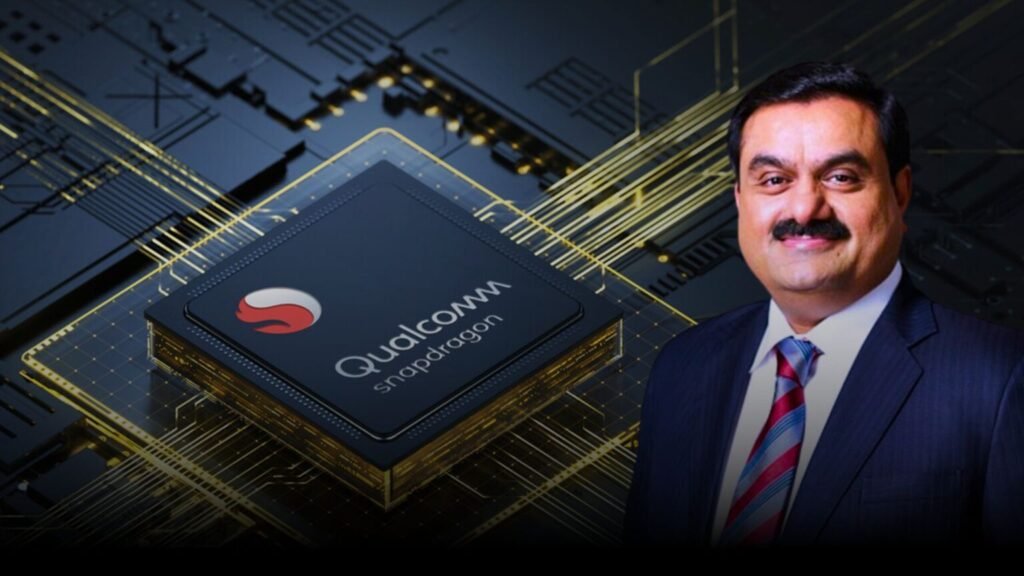

Gautam Adani Expresses Interest in Qualcomm’s Ambitious Plans in Semiconductors and AI

11 March 2024 – Indian business tycoon Gautam Adani has shown keen interest in the expansive vision and plans of chip giant Qualcomm, particularly in the domains of semiconductors, artificial intelligence (AI), and emerging technologies. Adani, the head of the Adani Group, shared insights from his meeting with Qualcomm CEO Cristiano R Amon, expressing enthusiasm for the multinational corporation’s groundbreaking work.

Ref: https://thekarostartup.com/gautam-adani-expresses-interest-in-qualcomms/

Biden meets with Intel’s CEO and other execs in push to revive domestic chip industry

Ref: https://www.oregonlive.com/silicon-forest/2021/04/biden-meets-with-intels-ceo-and-other-execs-in-push-to-revive-domestic-semiconductor-industry.html

Biden signs $280B CHIPS act in bid to boost US over China

Ref: https://www.newsandsentinel.com/news/2022/08/biden-signs-280b-chips-act-in-bid-to-boost-us-over-china/

Source: Netscribes-Image, BT-Images, Linkedin
